With the release of Sitecore 9.3, there is a lot of hype in the community around the new bells and whistles that the updated version of the platform offers.

What I am most excited about are the huge performance and stability improvements in xConnect and xDB, and in this post, I will highlight those key areas.


Search Indexer Rebuild Enhancements

Performance

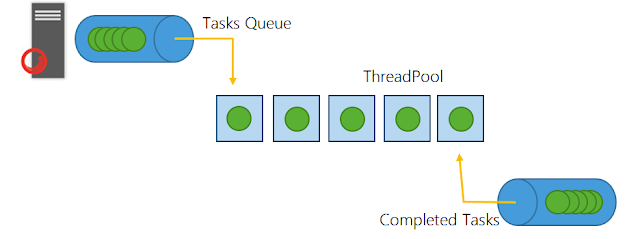

Sitecore made some very important improvements to the stability of rebuilds on systems under stress, which also allow for faster rebuilds:

- Implemented batching and parallelization during the rebuild synchronization stage

- Reduced the default SplitRecordsThreshold from 25,000 to 1,000

- Halved the ParallelizationDegree of the rebuild

- The sync stage of a rebuild can now finish once an indexing cycle detects fewer than 25,000 changes
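To make the batching and sync-stage changes more concrete, here is a minimal Python sketch of the idea. It is not Sitecore's indexer code: the function names are hypothetical, SplitRecordsThreshold is assumed to act as a maximum batch size, and the two constants simply reuse the figures quoted in this post. A bounded thread pool stands in for the ParallelizationDegree setting.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence

# Hypothetical constants reusing the figures quoted in this post; they are
# not read from any real Sitecore configuration file.
SPLIT_RECORDS_THRESHOLD = 1_000   # assumed to act as a maximum batch size
SYNC_FINISH_THRESHOLD = 25_000    # a cycle below this many changes ends the sync stage


def split_into_batches(records: Sequence[str], size: int) -> List[Sequence[str]]:
    """Split a change set into consecutive batches of at most `size` records."""
    return [records[i:i + size] for i in range(0, len(records), size)]


def sync_cycle(changes: Sequence[str],
               index_batch: Callable[[Sequence[str]], int],
               parallelization_degree: int) -> int:
    """Index one cycle's changes batch by batch on a bounded thread pool."""
    batches = split_into_batches(changes, SPLIT_RECORDS_THRESHOLD)
    with ThreadPoolExecutor(max_workers=parallelization_degree) as pool:
        return sum(pool.map(index_batch, batches))


def run_sync_stage(next_changes: Callable[[], Sequence[str]],
                   index_batch: Callable[[Sequence[str]], int],
                   parallelization_degree: int) -> int:
    """Run indexing cycles until one detects fewer changes than the threshold.

    Returns the number of cycles that were needed.
    """
    cycles = 0
    while True:
        changes = next_changes()
        sync_cycle(changes, index_batch, parallelization_degree)
        cycles += 1
        if len(changes) < SYNC_FINISH_THRESHOLD:
            return cycles


# Usage: three cycles of incoming changes; only the last drops below 25,000.
pending = iter([30_000, 26_000, 4_000])
cycles = run_sync_stage(lambda: ["change"] * next(pending), len, parallelization_degree=4)
print(cycles)  # 3
```

Smaller batches keep each unit of work short, so a slow or failing batch holds up less of the rebuild, while the pool size caps how hard the indexer pushes the underlying stores at once.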

New Commands

- Check the status of a rebuild: -rebuildmonitor / -rm

  - Previously, you could only check the logs for the completion entries

- Delete obsolete data from the index: -cleanuprebuildindex / -cri

Rebuild Resume

If you have a lot of interaction data, rebuilds can take a very long time!

If you have a hiccup during the process, there is finally an option to resume without having to start from scratch! A major feature, to say the least.
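This post doesn't cover how Sitecore implements resume internally, so the following is only a generic Python illustration of checkpoint-based resume: progress is saved after every completed batch, and a restarted run picks up from the saved position instead of batch 0. The checkpoint file, its JSON layout and the function names are all hypothetical.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable, Sequence


def rebuild_with_resume(batches: Sequence[Sequence[str]],
                        index_batch: Callable[[Sequence[str]], None],
                        checkpoint: Path) -> int:
    """Index batches in order, saving progress after each completed batch.

    If a checkpoint exists from an interrupted run, start from the batch it
    points at instead of batch 0. Returns how many batches this run indexed.
    """
    start = 0
    if checkpoint.exists():
        start = json.loads(checkpoint.read_text())["next_batch"]
    processed = 0
    for i in range(start, len(batches)):
        index_batch(batches[i])
        # Only record progress once the batch has been fully indexed.
        checkpoint.write_text(json.dumps({"next_batch": i + 1}))
        processed += 1
    return processed


# Usage: the first run hits a simulated hiccup on batch "c"; the second resumes.
ckpt = Path(tempfile.mkdtemp()) / "rebuild-checkpoint.json"
batches = [["a"], ["b"], ["c"], ["d"]]
indexed = []
fail_once = {"c"}


def flaky_index(batch):
    if batch[0] in fail_once:
        fail_once.discard(batch[0])
        raise RuntimeError("simulated hiccup")
    indexed.extend(batch)


try:
    rebuild_with_resume(batches, flaky_index, ckpt)
except RuntimeError:
    pass
print(indexed)                                           # ['a', 'b']
print(rebuild_with_resume(batches, flaky_index, ckpt))   # 2
print(indexed)                                           # ['a', 'b', 'c', 'd']
```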

Sharding Deployment Tool Enhancements

With the 9.3 Database Deployment Tool, we finally have the ability to add shards to and remove shards from the xDB collection store. Being able to add shards is especially important, as I mentioned in my previous post.

To summarize, additional collection shards mean additional SQL compute capacity to serve incoming queries in the distributed configuration, and thus faster query response times and index builds. Additional shards also increase total cluster storage capacity, speed up processing, and offer higher availability at a much lower cost than vertical scaling.
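As an illustration of why adding shards helps, the Python sketch below models a toy range-based shard map, where each shard owns a contiguous slice of the contact ID key space. Adding a shard splits the busiest slice at its median, so only the contacts in that slice are affected and the busiest shard's load drops by roughly half. This is a simplified model for intuition only; it is not how the Sitecore Database Deployment Tool is implemented, and RangeShardMap is a hypothetical name.

```python
import bisect
import uuid
from collections import Counter
from typing import List

KEY_SPACE = 2 ** 128  # contact IDs modeled as 128-bit integers (like GUIDs)


class RangeShardMap:
    """Toy range shard map: shard i owns keys in [bounds[i], bounds[i + 1])."""

    def __init__(self, shard_count: int) -> None:
        step = KEY_SPACE // shard_count
        self.bounds: List[int] = [i * step for i in range(shard_count)]

    def shard_for(self, key: int) -> int:
        # The owning shard is the last one whose lower bound is <= key.
        return bisect.bisect_right(self.bounds, key) - 1

    def add_shard(self, keys: List[int]) -> None:
        """Add a shard by splitting the busiest shard's range at its median key.

        Only keys in the busiest range are affected; every other range is
        unchanged (shards after the split are renumbered by one).
        Assumes the busiest shard holds at least two distinct keys.
        """
        load = Counter(self.shard_for(k) for k in keys)
        busiest = load.most_common(1)[0][0]
        owned = sorted(k for k in keys if self.shard_for(k) == busiest)
        self.bounds.insert(busiest + 1, owned[len(owned) // 2])


# Usage: 10,000 random contacts on 2 shards, then scale out to 3.
contacts = [uuid.uuid4().int for _ in range(10_000)]
shard_map = RangeShardMap(2)
before = Counter(shard_map.shard_for(c) for c in contacts)
shard_map.add_shard(contacts)
after = Counter(shard_map.shard_for(c) for c in contacts)
print(sorted(before.values()), sorted(after.values()))
```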

Before you can add or remove shards, you will need to run an upgrade tool that modifies your pre-9.3 collection databases to support these operations.

Web Tracker Stability

The following improvements have been made to the Web Tracker's stability and performance:

- Session expiration batching

- Optimization of the Web Tracker and xConnect communication

- Optimization of the Web Tracker and Reference Data Service communication
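Session expiration batching is easiest to picture as grouping expired sessions into one request per batch rather than one request per session. The Python sketch below shows that general pattern; the batch size of 100 and the submit callback are hypothetical stand-ins, not the Web Tracker's actual settings or API.

```python
from typing import Callable, Iterable, List, Sequence


def flush_expired_sessions(expired: Iterable[str],
                           submit: Callable[[Sequence[str]], None],
                           batch_size: int = 100) -> int:
    """Submit expired sessions in batches rather than one call per session.

    Returns the number of submit calls (i.e. round trips) made.
    """
    calls = 0
    batch: List[str] = []
    for session_id in expired:
        batch.append(session_id)
        if len(batch) == batch_size:
            submit(batch)
            calls += 1
            batch = []  # start a fresh list so submitted batches are not mutated
    if batch:  # flush the final, partially filled batch
        submit(batch)
        calls += 1
    return calls


# Usage: 250 expired sessions become 3 submissions instead of 250.
sent: List[Sequence[str]] = []
calls = flush_expired_sessions((f"session-{i}" for i in range(250)), sent.append)
print(calls, [len(b) for b in sent])  # 3 [100, 100, 50]
```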

Reference Data Layer Redesign

Previous 9.x versions had a lot of Reference Data issues that required patches and SQL database scripts to resolve. See my post on Critical Patches for 9.1.

In 9.3, the Reference Data layer has been completely redesigned from the ground up, with the main focus on performance.

New TVP Provider

Sitecore uses table-valued parameters (TVPs) to send aggregated information to SQL Server, because they are a very fast way to communicate with it. Table-valued parameters let you send multiple rows of data to a function or stored procedure in a single call, without the overhead of creating a temporary table or passing lots of individual parameters.

Sitecore has added a new TVP provider in 9.3 that provides major performance improvements.
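The round-trip saving behind TVPs can be sketched in Python with a mock connection that simply records each statement it receives. The table, stored procedure and parameter shapes are hypothetical and no real database is involved; the point is that the TVP version sends all rows as one parameter in a single call, while the naive version issues one statement per row.

```python
from dataclasses import dataclass, field
from typing import Any, List, Sequence, Tuple

Row = Tuple[int, str]


@dataclass
class RecordingConnection:
    """Mock connection: records each statement instead of talking to SQL Server."""
    calls: List[Tuple[str, Tuple[Any, ...]]] = field(default_factory=list)

    def execute(self, sql: str, params: Tuple[Any, ...]) -> None:
        self.calls.append((sql, params))


def save_row_by_row(conn: RecordingConnection, rows: Sequence[Row]) -> None:
    # Naive approach: one INSERT, and so one round trip, per row.
    for row in rows:
        conn.execute("INSERT INTO dbo.Interactions (Id, ContactId) VALUES (?, ?)", row)


def save_with_tvp(conn: RecordingConnection, rows: Sequence[Row]) -> None:
    # TVP approach: every row travels as a single table-valued parameter in one
    # call; on the server, the stored procedure would declare it as a READONLY
    # parameter of a user-defined table type.
    conn.execute("EXEC dbo.SaveInteractions @Rows = ?", (list(rows),))


# Usage: the same 500 rows, sent both ways.
rows = [(i, f"contact-{i}") for i in range(500)]
naive, tvp = RecordingConnection(), RecordingConnection()
save_row_by_row(naive, rows)
save_with_tvp(tvp, rows)
print(len(naive.calls), len(tvp.calls))  # 500 1
```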

Ability to Turn On and Off Performance Counters in Azure

If you are deploying to PaaS, performance counters are now disabled by default, just like the CMS counters.

With 9.3, you have the ability to switch performance counters on and off for the xConnect, xDB and Cortex services.
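Conceptually, a switchable counter is a registry whose updates become no-ops when it is turned off, which is where the overhead savings come from. The Python class below is a hypothetical sketch of that pattern, not Sitecore's configuration or API, and the counter name is made up.

```python
import threading
from typing import Dict


class PerformanceCounters:
    """Toy counter registry with an on/off switch (hypothetical, not Sitecore's API)."""

    def __init__(self, enabled: bool = False) -> None:
        # Off by default, mirroring the PaaS default described in this section.
        self.enabled = enabled
        self._values: Dict[str, int] = {}
        self._lock = threading.Lock()

    def increment(self, name: str, by: int = 1) -> None:
        if not self.enabled:
            return  # disabled: skip locking and bookkeeping entirely
        with self._lock:
            self._values[name] = self._values.get(name, 0) + by

    def value(self, name: str) -> int:
        with self._lock:
            return self._values.get(name, 0)


# Usage: the first increment is ignored because counters start disabled.
counters = PerformanceCounters()
counters.increment("xconnect.requests")
counters.enabled = True
counters.increment("xconnect.requests")
print(counters.value("xconnect.requests"))  # 1
```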