Having performed Sitecore xDB index rebuilds many times with large data sets, I wanted to share some key tips to ensure a successful and speedy rebuild.

My experience has been on Azure PaaS, with both Azure Search and SolrCloud, but these techniques can be applied to on-premise and IaaS as well.

Change The Log Level

Before starting a large rebuild job, it's important to enable the proper logging in case you need to investigate any issues that may arise. By default, the log level is set to Warning. Change it to Information.

Open the App_Data\jobs\continuous\IndexWorker\App_data\config\sitecore\CoreServices\sc.Serilog.xml file and change the MinimumLevel to Information:

<MinimumLevel>

<Default>Information</Default>

</MinimumLevel>

Optimize Your Indexer Batch Size

Don't be overeager with your indexer's batch size setting. This setting determines how many contacts or interactions are loaded per parallel stream during an index rebuild. It is found in the App_Data\jobs\continuous\IndexWorker\App_data\config\sitecore\SearchIndexer\sc.Xdb.Collection.IndexerSettings.xml file:

<BatchSize>1000</BatchSize>

I have had success reducing this size to 500, as it helps execution go faster and prevents you from hitting timeouts during your rebuild when your shard databases are under heavy load.

Reduce Your SplitRecordsThreshold

This is a good tip from a member of our Sitecore community. By default, this value is set to 25000. Reducing it can also make your rebuilds run faster and improve your live indexing. Like the indexer batch size, this setting is found in the following location:

App_Data\jobs\continuous\IndexWorker\App_data\config\sitecore\SearchIndexer\sc.Xdb.Collection.IndexerSettings.xml

<SplitRecordsThreshold>25000</SplitRecordsThreshold>

I have had success reducing this size to a little less than half the original value, 12000.
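Since BatchSize and SplitRecordsThreshold live in the same file, both can be changed in one pass. Here is a minimal Python sketch of that idea, not a Sitecore tool: the `set_settings` helper and the trimmed sample XML (including its wrapper elements) are made up for illustration, and the code simply rewrites the text of every element whose tag matches. ElementTree drops XML comments by default, so keep a backup of the real file before trying anything like this.

```python
import xml.etree.ElementTree as ET


def set_settings(xml_text: str, new_values: dict) -> str:
    """Return xml_text with the text of every matching element replaced."""
    root = ET.fromstring(xml_text)
    for tag, value in new_values.items():
        matches = list(root.iter(tag))
        if not matches:
            # Fail loudly rather than silently leaving a setting unchanged.
            raise KeyError(f"no <{tag}> element found")
        for element in matches:
            element.text = str(value)
    return ET.tostring(root, encoding="unicode")


# Hypothetical, heavily trimmed stand-in for sc.Xdb.Collection.IndexerSettings.xml.
SAMPLE = """<Settings>
  <Options>
    <BatchSize>1000</BatchSize>
    <SplitRecordsThreshold>25000</SplitRecordsThreshold>
  </Options>
</Settings>"""

updated = set_settings(SAMPLE, {"BatchSize": 500, "SplitRecordsThreshold": 12000})
print(updated)
```

Raising on a missing tag is deliberate: a typo in a setting name should stop the script instead of producing a file that looks edited but is not.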

Reduce Your Index Writer's ParallelizationDegree

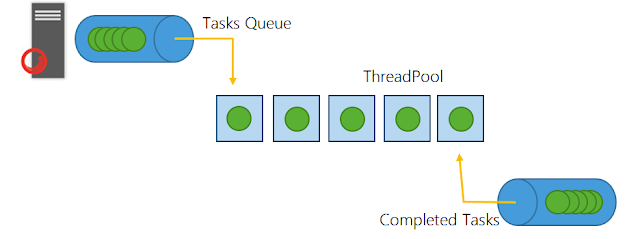

To decrease load on your search service, another good suggestion is to decrease the ParallelizationDegree setting, which is 4 by default. You will see this setting in many of the other configs in your xConnect App Services. It determines how many parallel streams of data can be processed at the same time.

This setting is found in this location for Solr:

\App_Data\jobs\continuous\IndexWorker\App_data\config\sitecore\SearchIndexer\sc.Xdb.Collection.IndexWriter.SOLR.xml

<ParallelizationDegree>4</ParallelizationDegree>

This setting is found in this location for Azure Search:

\App_Data\jobs\continuous\IndexWorker\App_data\config\sitecore\SearchIndexer\sc.Xdb.Collection.IndexWriter.AzureSearch.xml

<ParallelizationDegree>4</ParallelizationDegree>

I have seen large improvements reducing this value from 4 to 1.

Optimize Your Databases

Optimize, optimize, optimize your shard databases!!!

If you are not already using the AzureSQLMaintenance stored procedure on your Sitecore databases, do it today! This is critically important not only for your shard databases but also for your other Sitecore databases like Core, Master and Web. The Marketing Automation and Reference databases also get hammered pretty hard, so make sure that this maintenance is applied and run regularly on these as well.

Note that this maintenance is 100% necessary. As Grant Killian says: "The expectation that Azure SQL is a 'fully managed solution' is somewhat misleading, as rebuilding query stats and defragmentation are the user’s responsibility."

Sitecore recommends an approach like this: https://techcommunity.microsoft.com/t5/Azure-Database-Support-Blog/Automating-Azure-SQL-DB-index-and-statistics-maintenance-using/ba-p/368974

Schedule an Azure Automation “Runbook” to attend to this after hours for all Sitecore databases.

Run the Rebuild

After all of these things have been completed, run the xDB rebuild as Sitecore's docs describe. In Kudu, go to site\wwwroot\App_data\jobs\continuous\IndexWorker and execute this command:

.\XConnectSearchIndexer.exe -rr

After this, you will see the magic start: the document count in your inactive / rebuild index is first reset to 0, and then it starts gradually increasing.

Unfortunately, there is no way to see exactly how far along you are. There is, however, a query that you can run against your Azure Search or Solr indexes to check which rebuild state the indexer is currently in.

Azure Search Query

$filter=id eq 'xdb-rebuild-status'&$select=id,rebuildstate

Solr Query

id:"xdb-rebuild-status"
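If you want to send these queries over HTTP rather than through a portal or admin UI, they need to be URL-encoded first. The sketch below builds both URLs with Python's standard library. The service, index and core names, the Solr base URL and the `api-version` value are placeholders I have assumed, so substitute your own. Azure Search also expects an `api-key` request header, which is not shown here.

```python
from urllib.parse import quote, urlencode

STATUS_ID = "xdb-rebuild-status"


def azure_search_status_url(service: str, index: str, api_version: str) -> str:
    """Build a GET URL for the Azure Search rebuild-status query."""
    params = {
        "api-version": api_version,
        "$filter": f"id eq '{STATUS_ID}'",
        "$select": "id,rebuildstate",
    }
    # safe="$" keeps the OData parameter names readable ($filter, $select).
    query = urlencode(params, safe="$", quote_via=quote)
    return f"https://{service}.search.windows.net/indexes/{index}/docs?{query}"


def solr_status_url(base_url: str, core: str) -> str:
    """Build a GET URL for the Solr rebuild-status query."""
    query = urlencode({"q": f'id:"{STATUS_ID}"'}, quote_via=quote)
    return f"{base_url.rstrip('/')}/{core}/select?{query}"


# Placeholder names only; replace them with your own service, index and core.
print(azure_search_status_url("my-search-service", "my-xdb-index", "2020-06-30"))
print(solr_status_url("http://localhost:8983/solr", "my_xdb_core"))
```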

Returned Index Rebuild Status:

Default = 0

RebuildRequested = 1

Starting = 2

RebuildingExistingData = 3

RebuildingIncomingChanges = 4

Finishing = 5

Finished = 6
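When checking the status repeatedly, it is easier to read a name than a number. As a small illustration, the values listed above can be mirrored in a Python enum. The `RebuildState` class and the `decode_rebuild_state` helper are my own names, not part of Sitecore, and I am assuming the field may come back as either a number or a numeric string.

```python
from enum import IntEnum


class RebuildState(IntEnum):
    """Mirror of the rebuild status values listed above."""
    Default = 0
    RebuildRequested = 1
    Starting = 2
    RebuildingExistingData = 3
    RebuildingIncomingChanges = 4
    Finishing = 5
    Finished = 6


def decode_rebuild_state(raw_value) -> RebuildState:
    """Convert a raw status value (int or numeric string) to a RebuildState."""
    # Raises ValueError for anything outside the known 0..6 range.
    return RebuildState(int(raw_value))


state = decode_rebuild_state("4")
print(state.name)                      # RebuildingIncomingChanges
print(state is RebuildState.Finished)  # False
```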